- Blog

- Keygen forza 5 pc download

- Install Openwrt On X86 Pc

- Free mac windows emulator

- Mejor configuracion de god of war ppsspp android

- Which dolphin emulator version is the best for mac

- What does a hole punched drivers license mean in california

- Saint etienne turnpike rarity

- Lakshya ko har haal me pana hai mp3 song download

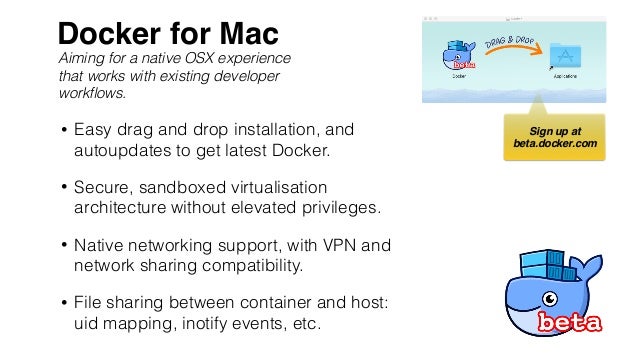

- Docker for mac file sharing

- Icecream screen recorder serial key 5-10

- YEP DAN PPI DANGDUT CLOWER DOWNLy

- Pills and potions instrumental

- Anime sousei no aquarion sub indo moon

- Bryton Bridge 2 Software

Our stack from before now has two extra levels: the VM, and file sharing. The layers do not all behave alike. HFS+, for example, is (optionally) case-insensitive, and file-system events (notifications about changes to files and directories) don't work quite the same way. There are probably other aspects I'm forgetting, such as whether files can be atomically replaced, or deleted whilst still being open, or whatever else sits on the long tail of occasionally important behaviour.
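The differences mentioned above (case sensitivity, atomic replacement, deleting a file that is still open) can be checked empirically rather than assumed. What follows is a minimal sketch, not anything from Docker itself: the function names are made up for illustration, and it only probes the directory you point it at.

```python
import os
import tempfile


def is_case_sensitive(directory: str) -> bool:
    """True if 'probe.txt' and 'PROBE.TXT' are different names in `directory`."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        with open(os.path.join(scratch, "probe.txt"), "w") as handle:
            handle.write("x")
        return not os.path.exists(os.path.join(scratch, "PROBE.TXT"))


def can_replace_existing(directory: str) -> bool:
    """True if os.replace() can swap a staged file over an existing one."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        target = os.path.join(scratch, "config.txt")
        staged = os.path.join(scratch, "config.txt.tmp")
        with open(target, "w") as handle:
            handle.write("old")
        with open(staged, "w") as handle:
            handle.write("new")
        try:
            os.replace(staged, target)
        except OSError:
            return False
        with open(target) as handle:
            return handle.read() == "new"


def can_unlink_while_open(directory: str) -> bool:
    """True if a file can be removed while a handle to it is still open."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        path = os.path.join(scratch, "open.txt")
        with open(path, "w+") as handle:
            handle.write("still here")
            handle.flush()
            try:
                os.remove(path)
            except OSError:  # some platforms refuse to remove open files
                return False
            handle.seek(0)
            return handle.read() == "still here"


def probe_directory(directory: str) -> dict:
    """Collect the three probes for one directory."""
    return {
        "case_sensitive": is_case_sensitive(directory),
        "replace_existing": can_replace_existing(directory),
        "unlink_while_open": can_unlink_while_open(directory),
    }
```

Running `probe_directory` once on a path inside the container and once on a directory shared from the host is one way to see whether the two layers actually disagree.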

In theory there's no reason why this setup should prefer one hypervisor (the tech that virtualizes hardware; examples would be VMware Fusion, Parallels, VirtualBox, etc.) over another. On macOS, it's xhyve. There is nothing spectacular or special about xhyve, as far as I'm aware, except that the virtualization support is bundled basically as part of macOS by Apple as a framework (Hypervisor.framework), and xhyve just uses that.

The important thing, though, is that we no longer share any resources between the host and the VM. If we want to share a directory now, we need to share between macOS and the virtual machine, and from the virtual machine down to the Docker container. Worse, because the invisible virtual machine (Linux) and the host use different file-systems (say ext4 in the VM, and HFS+ on the host), not all concepts are portable.
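Since a shared directory has to cross from the host to the VM and then into the container, one rough way to see what that costs is to time many small file operations in different directories. This is only a sketch under assumptions: `time_small_file_ops` is a name invented here, the workload (create, write one byte, stat) is arbitrary, and the numbers mean something only when compared on the same machine.

```python
import os
import tempfile
import time


def time_small_file_ops(directory: str, count: int = 200) -> float:
    """Seconds taken to create, write and stat `count` tiny files in `directory`."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        start = time.perf_counter()
        for index in range(count):
            path = os.path.join(scratch, f"file_{index}.txt")
            with open(path, "w") as handle:
                handle.write("x")
            os.stat(path)
        return time.perf_counter() - start
```

Inside a container, calling it once with a container-local directory and once with a directory shared from the host gives two figures to compare; which paths those are depends entirely on your own setup.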

Docker for Mac file sharing

File-systems are tricky in general; that is a statement we need to make up-front and unambiguously. POSIX file-systems (most of the ones you know and love) even more so, as POSIX specifies some guarantees about what it means to perform certain operations, and those requirements are fairly rigid.

In the contemporary age of computing there's a handful of file-systems, the most common features have more or less settled in, and there's not nearly as much innovation as there used to be. For most of us, most of the time, that's just fine. Solid State Drives (SSDs) cover all of our common use-cases and, by virtue of being absolutely screaming fast, propelled file-systems in general a decade into the future "by magic".

This post is a quick attempt to set out some thoughts about file-systems and the performance characteristics we expect, specifically looking at shared file-systems for development environments, Docker to be precise.

Docker on Linux is a very thin wrapper over cgroups and kernel namespaces; the performance is so close to the native performance of the underlying file-system and the underlying hardware that there's barely anything to discuss. In that scenario the file-system stack is short: when you do a FROM someimage:latest, and then mount or copy files into the container, you're creating a two or three layer deep stack, and Docker takes care of making sure all your pancake layers are there when you need them. The story doesn't change much if you use Docker shared volumes mapped between the container and the host, because the partitioning of the file-system that is visible to the container is all the magic of kernel namespacing and clever use of "overlay" file-systems, which are a way to "stack" multiple, possibly sparse, directories on top of one another to give a union view of all the parts of the stack.

This, however, isn't a story about Linux and Docker file sharing; this is about the situation on Mac, and possibly Windows (although I really have no idea about Windows). Because macOS and Windows aren't Linux, they don't have the cgroups and namespace features that make Docker work, which makes it impossible to run Docker natively on any platform that is not Linux. To get around this limitation, Docker simply manages an "invisible" Linux virtual machine.
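The "union view" that overlay file-systems give over a stack of directories can be illustrated with a toy lookup: search the layers from the top down and let the first match win. This is a sketch of the idea only, not how the kernel implements it (it ignores deletions, permissions and nested directories), and `resolve`, `union_listing` and `demo` are names invented for the illustration.

```python
import os
import tempfile
from typing import List, Optional


def resolve(layers: List[str], name: str) -> Optional[str]:
    """Return the path of `name` in the topmost layer that contains it."""
    for layer in layers:  # layers[0] is the top of the stack
        candidate = os.path.join(layer, name)
        if os.path.lexists(candidate):
            return candidate
    return None


def union_listing(layers: List[str]) -> List[str]:
    """Names visible in the merged view: the union of every layer's entries."""
    names = set()
    for layer in layers:
        names.update(os.listdir(layer))
    return sorted(names)


def write(path: str, text: str) -> None:
    with open(path, "w") as handle:
        handle.write(text)


def demo():
    """Build a two-layer stack in a temp directory and read through it."""
    with tempfile.TemporaryDirectory() as root:
        image = os.path.join(root, "image")          # lower layer
        container = os.path.join(root, "container")  # upper layer
        os.mkdir(image)
        os.mkdir(container)
        write(os.path.join(image, "app.conf"), "from image")
        write(os.path.join(image, "base.txt"), "only in image")
        write(os.path.join(container, "app.conf"), "from container")

        layers = [container, image]
        with open(resolve(layers, "app.conf")) as handle:
            winner = handle.read()
        return union_listing(layers), winner
```

In `demo`, both layers contain `app.conf`, and the upper layer's copy is the one the merged view returns, while `base.txt` shows through from the lower layer.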
